In my Information Society course this week we talked about the rise to dominance of IBM in the late 1950s and early 1960s, and in particular the role of the IBM System/360 as a strategy for encouraging what we would today refer to as vendor lock-in. The rapid pace of development in computer hardware in this period meant that customers were frequently upgrading their equipment, and every such moment of decision was the opportunity (or risk, depending on your perspective) for a customer to consider an IBM competitor. By providing an entire line of software-compatible System/360 computers, IBM leveraged the socio-technical complexity of a computer installation to its own advantage. That is to say, the fact that a computer installation in this period included not only the central computer unit, but also an increasingly expensive ecosystem of peripherals, application programs, data formats, user interfaces, and human operators and programmers, meant that it was increasingly difficult to change any one component of the system without affecting (or at least considering) its interactions with every other component. By allowing users to reuse their investment in these “softer” elements of the system, which were now compatible across central computer units, IBM ensured that they would keep buying IBM hardware.

Thinking about computers in socio-technical terms helps make sense of one of the persistent questions facing both developers and historians of software, namely “why is software so hard?” In theory, computer code, although it might not be easy to write, should be easy to get right. It costs nothing (or at least, next to nothing) to “build” and distribute a software product. If you find a bug, you can fix it, and almost immediately deploy that fix to all of your users. There is no material object or artifact to repair, replace, or dispose of. As an abstraction or an isolated set of computer code, software is infinitely protean, almost ephemeral. As an element in a larger socio-technical system, software is extraordinarily durable.

One of the remarkable implications of all of this is that the software industry, which many consider to be one of the fastest-moving and most innovative industries in the world, is perhaps the industry most constrained by its own history. As one observer recently noted, today there are still more than 240 million lines of computer code written in the programming language COBOL, which was first introduced in 1959.[1] All of this COBOL code needs to be actively maintained, modified, and expanded. I was just reading an article in the most recent issue of the School of Informatics and Computing newsletter highlighting the fabulous new job that one of our 2013 graduates had landed. What was he doing? Programming in COBOL.

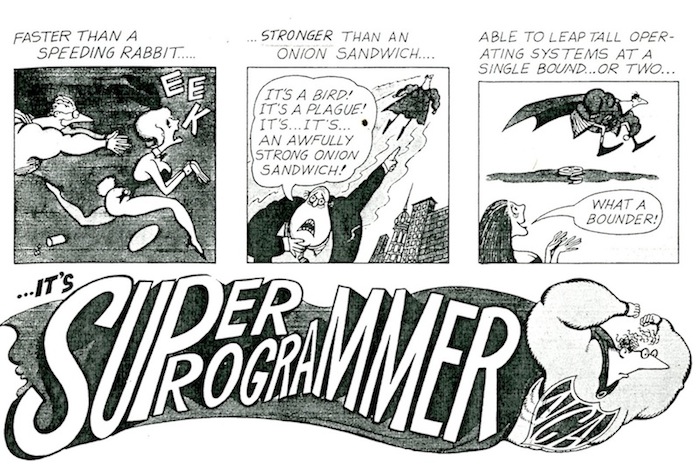

In my notes for my lecture about the emerging “software crisis” of the late 1960s, which was essentially a reflection of the realization by the computer industry that software was, indeed, a complex socio-technical system that could not be isolated from its larger environment (and therefore also not easily rationalized, routinized, or automated), I borrowed heavily from a short piece I wrote for the Annals of the History of Computing on software maintenance. The whole concept of software maintenance represents something of a paradox, at least according to the traditional understanding of software as mere computer code. After all, software does not wear out or break down in the traditional sense. Once a software-based system is working, it should work forever – or at least until the underlying hardware breaks down (which makes it somebody else’s problem). Any latent “bugs” that are subsequently revealed in software are considered flaws in the original design or implementation, not the result of the wear-and-tear of daily use, and in theory could be completely eliminated by rigorous development and testing methods. To quote from the article:

But despite the fact that software in theory never breaks down, in most large software projects maintenance represents the single most time-consuming and expensive phase of development. Since the early 1960s, software maintenance has been a continuous source of tension between computer programmers and users. In a 1972 article the influential industry analyst Richard Canning argued that the rising cost of software maintenance, which by that time already devoured as much as half or two-thirds of programming resources, was just the “tip of the maintenance iceberg.”[2] Today it is estimated that software maintenance represents between 50% and 70% of total expenditures on software.[3]

So if software is an artifact that, in theory, can never be broken, what is software maintenance? Although software does not wear out or break down in any traditional sense, what does “break” over time is the larger context of use. To borrow a concept from John Law and Donald MacKenzie, software is a heterogeneous technology. Unlike computer hardware, which is by definition a tangible “thing” that can readily be isolated, identified, and evaluated (and whose maintenance can be anticipated and accounted for), computer software is inextricably linked to a larger socio-technical system that includes machines (computers and their associated peripherals), people (users, designers, and developers), and processes (the corporate payroll system, for example). Software maintenance is therefore as much a social as a technological endeavor. Most often what needs to be “fixed” is the ongoing negotiation between the expectations of users, the larger context of use and operation, and the features of the software system in question.

If we consider software not as an end-product, or a finished good, but as a heterogeneous system, with both technological and social components, we can understand why the problem of software maintenance was (is) so complex. To begin with, it raises a fundamental question – one that has plagued software developers since the earliest days of electronic computing – namely, what does it mean for software to work properly? The most obvious answer is that it performs as expected, that the behavior of the system conforms to its original design or specification. But only a small percentage of software maintenance is devoted to fixing such bugs in implementation.[4]

The majority of software maintenance involves what are vaguely referred to in the literature as “enhancements.” These enhancements sometimes involved strictly technical measures – such as implementing performance optimizations – but most often they were what Richard Canning termed “responses to changes in the business environment.” This included the introduction of new functionality, as dictated by market, organizational, or legislative developments, but also changes in the larger technological or organizational system to which the software was inextricably bound. Software maintenance also incorporated such apparently non-technical tasks as documentation, training, support, and management.[5] In the technical literature that emerged in the 1980s, this “adaptive” dimension so dominated the larger problem of maintenance that some observers pushed for the abandonment of the term maintenance altogether. The process of adapting software to change would better be described as “software support”, “software evolution”, or (my personal favorite) “continuation engineering.”[6]

My conclusion in the Annals essay was that we need to re-evaluate the assumption that the history of software is only the history of computer code (or coders). The idea that the computer programmer, as Frederick Brooks famously described it in The Mythical Man-Month, like the poet, “works only slightly removed from pure thought-stuff. He builds his castles in the air, from air, creating by exertion of the imagination,” is both accurate and misleading. To a degree, Brooks’s fanciful metaphor is entirely accurate – at least when the programmer is working on constructing a new system. But when charged with maintaining a so-called “legacy” system, the programmer is working not with a blank slate, but a palimpsest. Computer code is indeed a kind of writing, and software development a form of literary production.

But the ease with which computer code can be written, modified, and deleted belies the durability of the underlying document. Because software is a tangible record, not only of the intentions of the original designer, but of the social, technological, and organizational context in which it was developed, it cannot be easily modified. “We never have a clean slate,” argued Bjarne Stroustrup, the creator of the widely used C++ programming language. “Whatever new we do must make it possible for people to make a transition from old tools and ideas to new.”[7] In this sense, software is less like a poem and more like a contract, a constitution, or a covenant. Despite the fact that the material costs associated with building software are low (in comparison with traditional, physical systems), the degree to which software is embedded in larger, heterogeneous systems makes starting from scratch almost impossible. Software is history, organization, and social relationships made tangible.

- [1] Michael Swaine. “Is Your Next Language COBOL?” _Dr. Dobb’s Journal_ (2008).

- [2] Richard Canning. “The Maintenance ‘Iceberg’.” EDP Analyzer 10(10), 1972, pp. 1-14.

- [3] Girish Parikh. “Maintenance: penny wise, program foolish.” SIGSOFT Softw. Eng. Notes 10(5), 1985.

- [4] David C. Rine. “A short overview of a history of software maintenance: as it pertains to reuse.” SIGSOFT Softw. Eng. Notes 16(4), 1991, pp. 60-63.

- [5] E. Burton Swanson. “The dimensions of maintenance.” In ICSE ’76: Proceedings of the 2nd International Conference on Software Engineering. IEEE Computer Society Press, 1976, pp. 492-497.

- [6] Girish Parikh. “What is software maintenance really?: what is in a name?” SIGSOFT Softw. Eng. Notes 9(2), 1984, pp. 114-116.

- [7] Bjarne Stroustrup. “A History of C++.” In History of Programming Languages. T.M. Bergin and R.G. Gibson, eds. ACM Press, 1996.
